Would You Still Trust ChatGPT If It Were Paid to Recommend Something?

The era of neutral AI advice might be officially over. Here's what that means for your business.

OpenAI just announced that ads are coming to ChatGPT. Not in some distant future roadmap. In the coming weeks.

And while most of the tech world is debating ad formats and revenue projections, we think there's a bigger question worth asking. One that matters for anyone using AI to make business decisions.

Can you trust advice from something that's being paid to give you specific answers?

What's Actually Happening

Let's start with the facts.

OpenAI confirmed that it will begin testing ads inside ChatGPT, starting in the US before expanding globally. In this first phase, ads will appear at the bottom of responses when there's a relevant sponsored product or service. They'll be clearly labeled and visually separated from the main answer.

OpenAI is making some promises:

Ads won't change how ChatGPT generates its response

They won't sell individual user data to advertisers

Targeting will rely on conversation context and limited personalization signals

No ads for users under 18

No ads on sensitive topics like health, mental health, or politics

All reasonable safeguards. All well-intentioned.

But here's where it gets complicated.

The Trust Problem Nobody's Talking About

When you ask a friend for a restaurant recommendation, you trust their answer because they have nothing to gain from it. Their only incentive is to actually help you.

When you ask Google, you've learned to scroll past the sponsored results. You know the first few links paid to be there. The interface trained you to be skeptical.

But ChatGPT feels different.

It talks to you like a knowledgeable advisor. It considers your specific situation. It gives you one answer, not ten blue links. That conversational format builds a different kind of trust. A more personal one.

Now imagine asking ChatGPT for a project management tool recommendation and seeing a sponsored suggestion at the bottom. OpenAI promises the ad didn't influence the answer above it. But do you believe that? More importantly, does it matter whether you believe it?

The perception of bias is almost as damaging as actual bias.

Once you know there's money involved, you start second-guessing everything. Was that recommendation genuinely the best option, or was it quietly nudged toward whoever was paying? Did the answer subtly set up the sponsored suggestion, even if it wasn't explicitly influenced?

This is the trap. Even if OpenAI keeps their promise perfectly, the trust dynamic has fundamentally shifted.

We've Seen This Movie Before

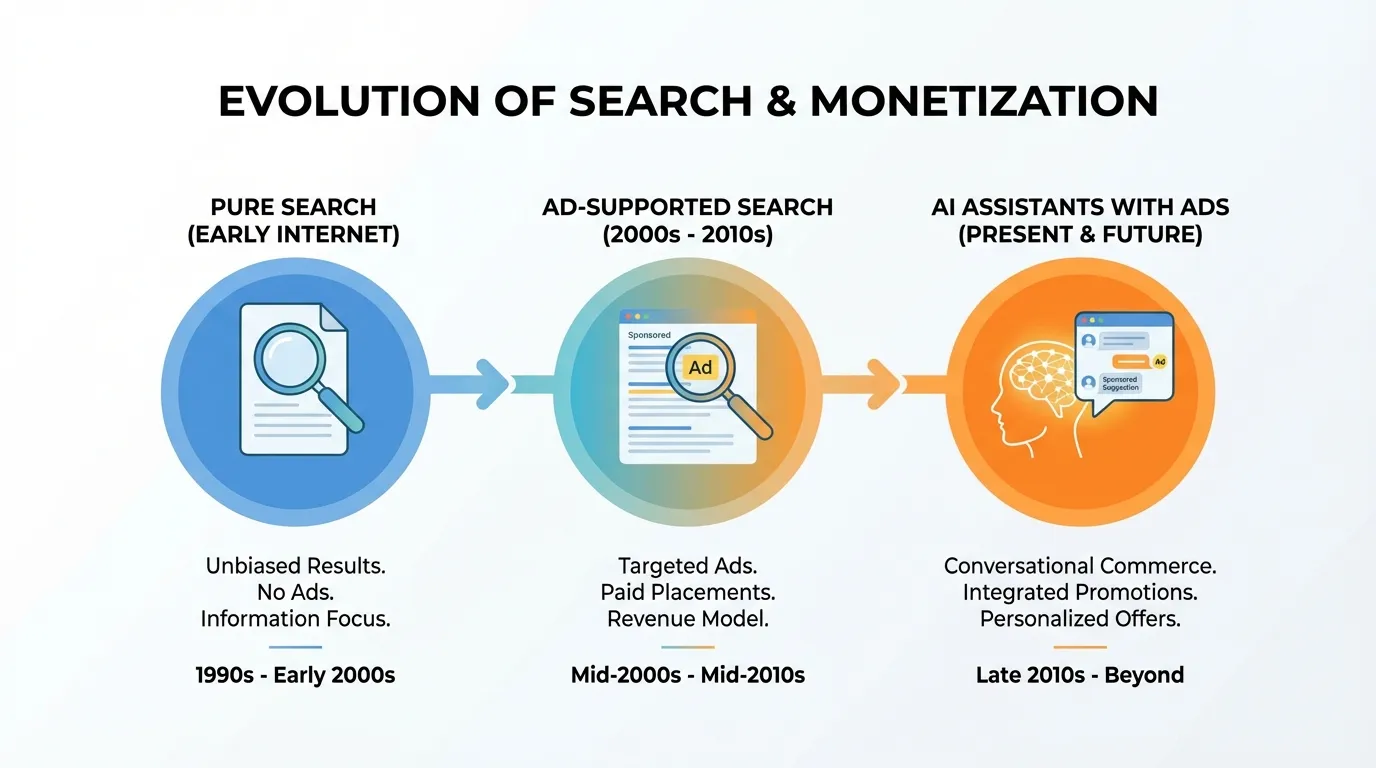

Remember when Google search results felt trustworthy? When the first result was probably the best answer to your question?

Then came SEO. Then paid ads that looked increasingly like organic results. Then affiliate content farms gaming the algorithm. Now many people add "reddit" to their search queries just to find authentic opinions.

The platform didn't necessarily lie. But the incentives changed, and user trust eroded gradually over years.

Social media followed the same path. Feeds that once showed you what friends were doing became algorithmic streams optimized for engagement and ad revenue. The content didn't disappear. It just got buried under what made money.

ChatGPT is starting this journey now. And while OpenAI might navigate it more carefully than others did, the pressure is real. Together with their partners, they've committed as much as $500 billion to AI infrastructure. That money needs to come from somewhere.

The Infrastructure Reality Behind AI

Speaking of that $500 billion. This week OpenAI also shared new commitments for Stargate, their multi-year program to build massive AI data centers for training and running models at scale.

The interesting part isn't the number. It's the commitment that these facilities will "pay their own way on energy." They're promising to fund additional power generation, grid upgrades, and storage so local electricity prices don't increase.

Think about that for a second. An AI company is now making energy policy commitments. They're negotiating with utilities to act as flexible loads during peak demand. They're funding infrastructure that will outlast any single product they build.

This is the reality behind every AI conversation. Massive compute. Massive energy. Massive capital requirements.

And massive capital needs massive revenue streams. Subscriptions are good. Enterprise contracts are better. But advertising? That's the scale they need.

ChatGPT isn't becoming an ad platform because OpenAI wants it to. It's becoming one because the economics demand it.

What This Means For Your Business

If you're using AI tools for business decisions, and most companies now are, this shift matters more than you might think.

At Dynode, we work with businesses implementing AI across their operations. Here's what we're telling our clients about this development.

1. Treat AI Recommendations as Inputs, Not Answers

The days of asking ChatGPT for a recommendation and taking it at face value should already be behind you. But this makes it official. AI suggestions are one data point among many. Verify important recommendations through multiple sources.

2. Understand the Timeline

The ad formats announced so far are intentionally conservative. Clear labels. Separated from main content. Limited topics. But some industry analysts expect 2026 to be the inflection year for more integrated advertising. Sponsored modules. Promoted GPTs. Interactive formats that blur the lines further.

What looks manageable today will likely get more complex.

3. Audit Your AI Dependencies

If you're building customer-facing tools on top of OpenAI's models, how will ads in ChatGPT affect how users perceive and trust your product? If your team relies on AI for vendor research or product comparisons, how do you validate that the information isn't subtly shaped by commercial interests?

These aren't hypothetical concerns anymore. They're operational realities.

4. Consider Your Own AI Strategy

This is where many businesses get stuck. They know AI is transforming how work gets done. They see competitors moving faster. But they're unsure how to implement AI systems that actually deliver reliable results.

The answer isn't to avoid AI because of advertising concerns. It's to build AI implementations with appropriate checks and balances from the start. Systems that augment human decision-making rather than replace it. Workflows that include verification steps. Tools configured for your specific business context rather than generic consumer applications.

The Bigger Picture

We don't think OpenAI is doing anything nefarious here. They're running a business that requires enormous resources. Advertising is a proven model that can fund those resources without putting the entire cost on users.

But we do think we're at an inflection point in how businesses should relate to AI tools.

For the last couple of years, ChatGPT felt like a neutral advisor. A tool with no agenda except to be helpful. That perception was always somewhat naive. Models have biases baked into their training data. Companies have priorities that shape product decisions. Nothing is truly neutral.

But there's a difference between inherent biases and explicit commercial relationships. Once advertisers are in the room, the dynamic changes. Not because anyone is lying. But because the incentives are now visible.

What We're Watching

A few developments will tell us how this plays out.

User behavior. Will people actually change how they interact with ChatGPT once ads appear? Or will convenience outweigh skepticism?

Transparency. OpenAI promises ads won't influence answers. Will they publish data to prove it? Will they allow independent audits? Trust requires verification.

Competitive response. Google, Anthropic, and others are watching closely. If ChatGPT ads hurt user trust, competitors will position themselves as the ad-free alternative. That pressure might keep everyone honest.

Enterprise implications. Will businesses pay premium prices for ad-free versions? Will enterprise AI tools remain separate from consumer advertising models?

Building AI Systems You Can Actually Trust

The shift happening at OpenAI reinforces something we've believed since founding Dynode. Businesses need AI implementations designed for their specific context, with appropriate oversight and verification built in.

Consumer AI tools are impressive. They're also designed for mass market appeal and increasingly for advertising revenue. Business-critical decisions deserve more than that.

When we work with clients on AI implementation, we focus on systems that:

Integrate with your existing data and workflows

Include human checkpoints for important decisions

Provide transparency into how recommendations are generated

Operate independently from consumer advertising models

Deliver measurable ROI you can actually track

That's not about avoiding AI. It's about using AI intelligently.

The Bottom Line

ChatGPT is getting ads. The era of perceiving AI assistants as neutral advisors is officially ending.

This isn't necessarily bad. It's just real. And businesses that adapt will treat AI recommendations as one input among many rather than as oracle pronouncements.

The companies that thrive will build verification into their workflows. They'll stay skeptical without becoming paralyzed. And they'll remember that every tool has incentives. The smart move isn't to abandon tools with commercial pressures. It's to understand those pressures and account for them.

Your AI assistant might soon be paid to recommend something. Your job is to build systems smart enough to know when that matters.

Ready to Build AI Systems You Can Trust?

If you're concerned about how advertising-driven AI might affect your business decisions, let's talk. Our AI Audit identifies where you're relying on consumer AI tools and helps you build implementations designed for business-critical use.

Dynode helps businesses implement AI systems that deliver measurable results without the uncertainty of consumer platforms. We audit, build, and deploy custom AI solutions designed for your specific needs.